Microfrontend optimization is not just smaller bundles

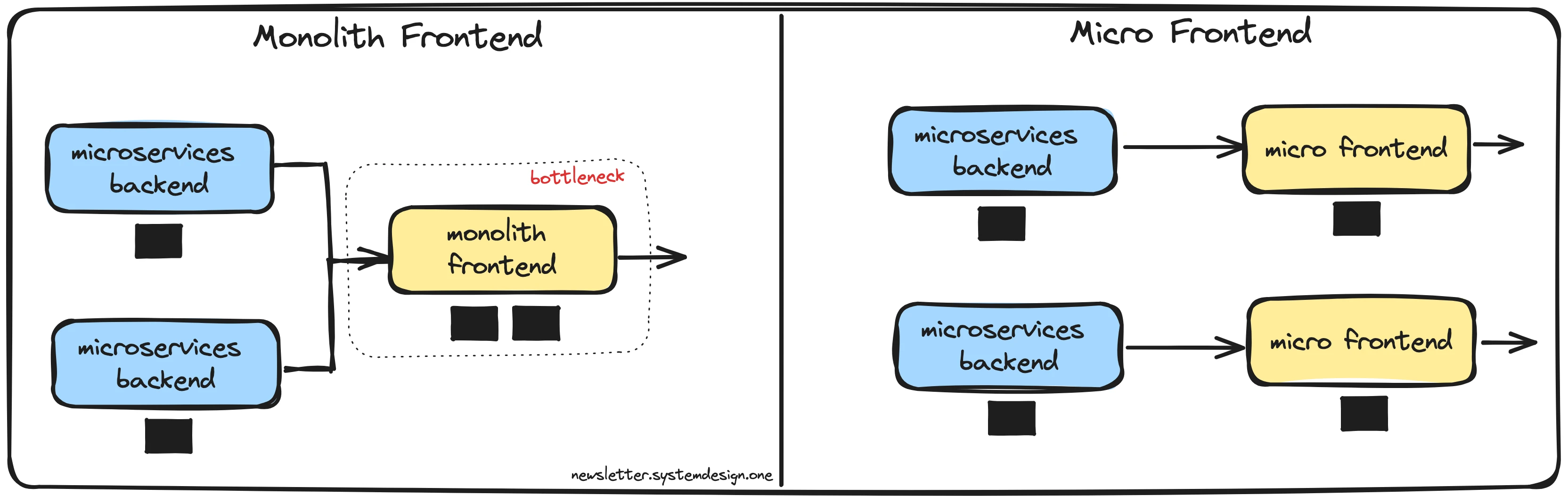

When I started looking into microfrontend optimization, I thought it was going to be a pretty standard frontend performance task.

Check bundle sizes. Tweak webpack. Split chunks better. Minify more. Improve caching. Done.

And yes, that was part of it. But the more I looked into it, the more obvious it became that in a microfrontend architecture, optimization is not really just about making bundles smaller.

It is about the whole system.

A smaller bundle helps, obviously. But once you have multiple remotes, shared dependencies, and different apps running together, the problem stops being only “how do I reduce JS size?” and becomes more like “what is this system actually doing when everything is loaded together?”

What I was trying to improve

At a high level, I was looking at a few things:

- reduce bundle sizes

- improve caching

- avoid duplicated dependencies across remotes

- understand some runtime issues I was seeing

Pretty normal goals.

The only difference is that with microfrontends, those goals are a bit more deceptive than they sound. You are not optimizing one app in isolation. You are optimizing a setup where several apps need to coexist, share code, and not step on each other.

That changes the game a bit.

First step: look at the build

The first thing I checked was the obvious stuff.

I used Bundle Analyzer, reviewed the webpack optimization settings, compared production output before and after each change, and tested different scenarios, such as running only the main app versus loading it together with other remotes.

Settings like splitChunks, runtimeChunk, and concatenateModules, along with Terser minification, were all part of the process.
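To make that a bit more concrete, here is a rough sketch of how those settings can sit together in a webpack 5 config. The values and the cache group name are illustrative assumptions, not the configuration from this project.

```typescript
// Illustrative webpack 5 settings; names and values are assumptions,
// not the configuration used in the project described here.
// In a real webpack.config.ts this object would be the default export.
const config = {
  mode: "production",
  output: {
    // Content hashes let unchanged chunks stay cached across deployments.
    filename: "[name].[contenthash].js",
  },
  optimization: {
    // Keep the webpack runtime in its own chunk, separate from app code.
    runtimeChunk: "single",
    // Scope hoisting; already enabled by default in production mode.
    concatenateModules: true,
    // Minification; webpack 5 uses Terser by default in production mode.
    minimize: true,
    splitChunks: {
      chunks: "all",
      cacheGroups: {
        // Hypothetical group: move node_modules code into a "vendors" chunk.
        vendors: {
          test: /[\\/]node_modules[\\/]/,
          name: "vendors",
          priority: -10,
        },
      },
    },
  },
};

console.log(Object.keys(config.optimization));
```

Settings like these are worth re-testing with the remotes loaded, since chunking choices that look fine for a standalone app may behave differently once Module Federation is involved.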

And to be fair, those changes did help.

The main bundle got smaller. The build output looked healthier. Caching was easier to reason about. In general, it felt like the app was moving in a better direction.

So from a pure build-output point of view, that part was working.

But that is also where I started noticing that bundle size was only one piece of the problem.

Where it got more interesting

The more interesting part was dependency sharing.

This is probably one of those things that sounds simple when you first hear it. You see shared dependencies in Module Federation and think, nice, we will avoid loading the same libraries multiple times.

In practice, it is not that clean.

I was looking at libraries like React, React DOM, Apollo Client, dayjs, styled-components, and MSAL. These are not tiny dependencies, so sharing them properly matters. And in theory, some of them should be straightforward singletons.
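For context, this is roughly what the sharing side looks like. It is a minimal sketch of the `shared` section passed to ModuleFederationPlugin, assuming placeholder version ranges; the real ones would come from each app's package.json, and other libraries would follow the same pattern.

```typescript
// Hypothetical `shared` section for ModuleFederationPlugin.
// Version ranges are placeholders chosen for illustration.
const shared = {
  // singleton: only one copy of the package should exist in the share scope.
  react: { singleton: true, requiredVersion: "^18.2.0" },
  "react-dom": { singleton: true, requiredVersion: "^18.2.0" },
  "styled-components": { singleton: true, requiredVersion: "^6.0.0" },
  // strictVersion: reject an incompatible version instead of only warning.
  "@apollo/client": { singleton: true, strictVersion: true, requiredVersion: "^3.8.0" },
  // No singleton flag here: an assumption that a second copy of dayjs
  // would be wasteful but not breaking.
  dayjs: { requiredVersion: "^1.11.0" },
};

console.log(Object.keys(shared));
```

Every host and remote needs a compatible version of this block for the sharing to work as intended, which is exactly where small differences can creep in.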

But “shared” does not automatically mean “solved.”

Even with shared config in place, things can still look duplicated, or at least behave in ways that make you question whether everything is actually being reused the way you expect. It only takes a version mismatch, a packaging difference, or a small configuration detail for things to get messy.
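A cheap way to catch the version mismatch case early is to compare what each app declares. The sketch below assumes you can collect each app's dependency versions into one object; the app names and versions are made up, and the function name is hypothetical.

```typescript
// Maps an app name to the dependency versions it declares.
type Manifest = Record<string, Record<string, string>>;

// Returns each dependency that is declared with more than one distinct
// version string, together with the version every app declares for it.
function findVersionDrift(manifest: Manifest): Manifest {
  const byDep: Manifest = {};
  for (const [app, deps] of Object.entries(manifest)) {
    for (const [dep, version] of Object.entries(deps)) {
      const entry = byDep[dep] ?? (byDep[dep] = {});
      entry[app] = version;
    }
  }
  const drift: Manifest = {};
  for (const [dep, versions] of Object.entries(byDep)) {
    if (new Set(Object.values(versions)).size > 1) {
      drift[dep] = versions;
    }
  }
  return drift;
}

// Made-up data for illustration only.
const drift = findVersionDrift({
  shell: { react: "18.2.0", dayjs: "1.11.10" },
  widgets: { react: "18.2.0", dayjs: "1.11.7" },
});
console.log(drift); // only dayjs is reported, with the version per app
```

This compares exact version strings rather than semver ranges, so it flags differences that Module Federation might still consider compatible. It is a starting point for a conversation, not a verdict.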

That was one of the biggest things I took from this: in microfrontends, the idea of sharing dependencies is simple, but the reality is much more fragile.

Smaller bundles do not mean the whole app is faster

This was probably the part I found most interesting.

While I was looking at build optimization, I also had some widgets that seemed to be rendering more often than they should. So the conversation was no longer only about bundle size: even if you make the build cleaner, runtime performance can still eat away at those gains.

And I think this is where a lot of frontend optimization discussions become too narrow.

People love talking about bundle size because it is measurable and easy to point at. You can literally open a report and say “this got smaller.” That is useful. But if components are still re-rendering too often, if props are flowing in ways that trigger unnecessary work, or if multiple remotes together create more overhead than expected, then the actual user experience is still paying for that.
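To show the kind of thing I mean with props, here is a small framework-agnostic sketch. It counts renders per widget and reports which props changed identity between two renders. The class, function, and widget names are all hypothetical, and in a real app the `record` call would live wherever the component renders.

```typescript
type Props = Record<string, unknown>;

// Lists the prop names whose value is not identical (Object.is)
// between the previous and the next render.
function changedProps(prev: Props, next: Props): string[] {
  const keys = Array.from(new Set(Object.keys(prev).concat(Object.keys(next))));
  return keys.filter((key) => !Object.is(prev[key], next[key]));
}

// Hypothetical tracker: counts renders per widget and remembers the last props.
class RenderTracker {
  private counts = new Map<string, number>();
  private lastProps = new Map<string, Props>();

  record(widget: string, props: Props): { count: number; changed: string[] } {
    const count = (this.counts.get(widget) ?? 0) + 1;
    const prev = this.lastProps.get(widget);
    // On the first render every prop counts as new.
    const changed = prev ? changedProps(prev, props) : Object.keys(props);
    this.counts.set(widget, count);
    this.lastProps.set(widget, props);
    return { count, changed };
  }
}

const tracker = new RenderTracker();
tracker.record("PriceWidget", { id: 1, filters: { region: "eu" } });
const second = tracker.record("PriceWidget", { id: 1, filters: { region: "eu" } });
console.log(second); // count is 2 and changed is ["filters"]
```

The `filters` object has the same content both times but is a new object on each call, so it registers as changed. That is the same identity comparison React uses for memoized components, which is why props like this can quietly trigger extra renders.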

So the more I looked into it, the less I saw this as a webpack-only exercise.

It became more about boundaries, how remotes interact, what gets loaded when, and whether the runtime behavior matches the architecture you think you have.

The main thing I took away

The main takeaway for me is that microfrontend optimization is a system problem much more than it is a config problem.

Webpack matters. Build settings matter. Chunking matters. Shared dependencies matter.

But none of those things on their own tell the full story.

You have to look at the system as a whole:

- what happens when one remote runs alone

- what changes when several remotes are loaded together

- which dependencies are actually shared vs only expected to be shared

- how much runtime overhead exists on top of the build output
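For the "actually shared vs only expected to be shared" question, one option is to look at what ends up registered at runtime. The sketch below assumes a share scope shaped as package name, then version, then some metadata. That mirrors what webpack appears to keep in `__webpack_share_scopes__.default`, but it is an internal structure, so treat the shape as an assumption. The sample data is made up.

```typescript
// Assumed shape of one share scope: package name -> version -> metadata.
type ShareScope = Record<string, Record<string, { from?: string }>>;

interface MultiVersionEntry {
  name: string;
  versions: { version: string; from: string }[];
}

// Returns every package that has more than one version registered in the scope.
function findMultiVersionPackages(scope: ShareScope): MultiVersionEntry[] {
  const result: MultiVersionEntry[] = [];
  for (const [name, versions] of Object.entries(scope)) {
    const entries = Object.entries(versions);
    if (entries.length > 1) {
      result.push({
        name,
        versions: entries.map(([version, info]) => ({
          version,
          from: info.from ?? "unknown",
        })),
      });
    }
  }
  return result;
}

// Made-up scope for illustration; app names and versions are hypothetical.
const sampleScope: ShareScope = {
  react: { "18.2.0": { from: "shell" } },
  dayjs: { "1.11.10": { from: "shell" }, "1.11.7": { from: "widgets" } },
};
const report = findMultiVersionPackages(sampleScope);
console.log(JSON.stringify(report, null, 2)); // lists dayjs with both versions
```

From code bundled by webpack, the real scope could be passed in instead of the sample. Keep in mind that a version being registered is not necessarily the same as it being loaded, so this only narrows down where to look.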

That is the part that made this more interesting than I expected.

I went into it thinking I was going to tweak some optimization settings and move on. Instead, it ended up being a reminder that performance work in microfrontend systems is mostly about understanding tradeoffs and interactions.

Not just reducing numbers in a bundle report.

One last thing

So yes, smaller bundles are good.

But that is not really the full story.

In a microfrontend setup, optimization is less about finding one clever webpack setting and more about understanding how the whole thing behaves once real remotes, real dependencies, and real runtime behavior enter the picture.

That is what makes it harder.

And honestly, that is also what makes it more interesting.